In this interconnected and machine-intelligent age, sensors are becoming an increasingly large part of mechanical design, so it is only logical that they are needed in simulation as well. Prior to Vortex Studio 2017b, it was already possible to determine whether two objects were colliding within a scene, via a piece of code called an intersect subscriber. However, although they are a powerful tool for monitoring collisions, intersect subscribers are cumbersome to use because they offer no easy way to obtain collision information for specific components of a machine or the environment. That’s where intersection sensors and triggers come in.

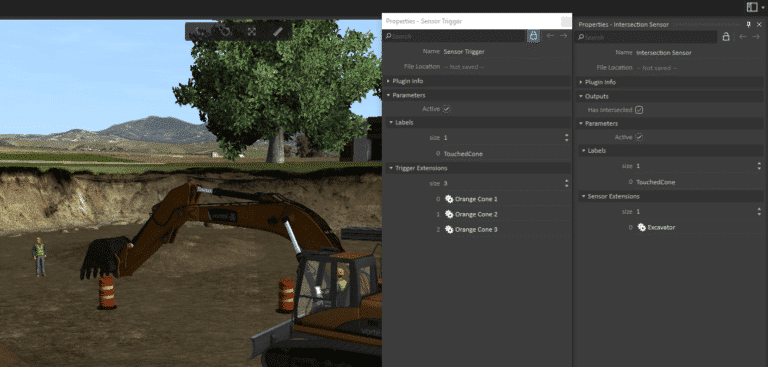

The new Intersection Sensor extension provides an easier way to track intersections between simulation objects. A “sensor” defines where to look, and a “trigger” defines what is being looked for. When the two intersect, we say that something has triggered the sensor.

Intersection Sensors and Sensor Triggers each refer to a list of extensions that provide them with the collision geometries to look for. These extensions can be anything, even objects that contain other extensions: they are recursively traversed to find all possible child collidables.

Intersection Sensors and Sensor Triggers also include a list of “labels,” which are plain strings defined by the user. If labels are present, only intersections between Sensors and Triggers that share a label are considered. This is very useful for filtering out uninteresting parts of the world! If no labels are specified on a Sensor, the field is treated as a wildcard that makes the Sensor compatible with everything.

In this example, a Trigger has been set up with the entire scene as its extension, meaning anything in the scene will be considered for intersections. The Sensor is set up with the bucket as its extension, and it shares a label with the Trigger. Bumping the bucket into anything in the scene makes the output “Intersection” become “true.” This output can then be connected to a warning indicator or a script for further effects.
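To make the two rules above concrete (recursive traversal of extensions and label filtering), here is a minimal sketch in plain Python. It is not the Vortex Studio API: `Node`, `collect_collidables`, and `labels_compatible` are hypothetical names invented for illustration. The text only describes the wildcard behavior for a Sensor with no labels; in this sketch a Trigger with no labels simply matches wildcard Sensors only, which is an assumption.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Node:
    """Hypothetical stand-in for an extension; not a Vortex Studio class."""
    name: str
    is_collidable: bool = False
    children: List["Node"] = field(default_factory=list)


def collect_collidables(node):
    """Recursively gather the names of every collidable at or below `node`."""
    found = [node.name] if node.is_collidable else []
    for child in node.children:
        found.extend(collect_collidables(child))
    return found


def labels_compatible(sensor_labels, trigger_labels):
    """A Sensor with no labels is a wildcard; otherwise one label must be shared."""
    if not sensor_labels:
        return True
    return bool(set(sensor_labels) & set(trigger_labels))


# A bucket that contains nested extensions, only some of which are collidable.
bucket = Node("bucket", children=[
    Node("bucket_shell", is_collidable=True),
    Node("teeth", children=[Node("tooth_1", is_collidable=True)]),
])

bucket_collidables = collect_collidables(bucket)
bucket_sees_terrain = labels_compatible(["terrain"], ["terrain", "rocks"])
wildcard_sees_all = labels_compatible([], ["rocks"])
mismatch = labels_compatible(["terrain"], ["rocks"])
```

In this sketch the bucket resolves to its two collidable descendants, and a Sensor labeled `terrain` is compatible with a Trigger labeled `terrain` and `rocks` but not with one labeled only `rocks`.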

In addition to Intersection Sensors, it is possible to perform raycasts using the same underlying system. Raycasts use the same label system, but don’t require a list of extensions. Instead, a starting point in world space is given, along with a direction (also in world space) and a maximum range. Note for advanced users: if the extension available in the Editor doesn’t provide enough information and you need the actual intersection data, the lower-level API can also be accessed through Python. Please refer to the Vortex Studio documentation.
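As a rough illustration of what the three raycast inputs mean (start point, direction, and maximum range, all in world space), the sketch below tests a ray against a single sphere in plain Python. It does not use the Vortex Studio API, and `raycast_sphere` is a hypothetical helper; the real raycast works against the simulation's collision geometries and also applies the label filter, which is left out here.

```python
import math


def raycast_sphere(origin, direction, max_range, center, radius):
    """Return the distance along the ray to the first hit within range, or None."""
    length = math.sqrt(sum(d * d for d in direction))
    if length == 0.0:
        return None  # a zero-length direction does not define a ray
    unit = [d / length for d in direction]

    # Solve |origin + t * unit - center|^2 = radius^2 for t.
    oc = [o - c for o, c in zip(origin, center)]
    b = sum(x * y for x, y in zip(oc, unit))
    c = sum(x * x for x in oc) - radius * radius
    disc = b * b - c
    if disc < 0.0:
        return None  # the ray's line misses the sphere entirely

    root = math.sqrt(disc)
    for t in (-b - root, -b + root):
        if 0.0 <= t <= max_range:
            return t  # nearest intersection in front of the start point
    return None


# A ray pointing down the -Z axis toward a unit sphere centered 5 units away.
hit_distance = raycast_sphere((0, 0, 0), (0, 0, -2), 10.0, (0, 0, -5), 1.0)
out_of_range = raycast_sphere((0, 0, 0), (0, 0, -2), 3.0, (0, 0, -5), 1.0)
```

The direction is normalized before use, so the maximum range is measured in world units regardless of the direction vector's length. With a range of 10 the ray reaches the sphere's near surface; with a range of 3 it stops short and reports no hit.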