CM Labs is proud of the realism of our simulations, which are based on engineering properties and accurate physics. Our work on cables and water has made our crane and marine simulation capabilities second to none. Likewise, to accurately model earthmoving vehicles, the soil they work with needs to behave like soil. Soil simulation in Vortex® Studio has to get the physical behavior right, and it also has to look right for total operator immersion. Vortex Studio includes a powerful earthwork simulation that uses a hybrid heightfield and particles approach to allow the real-time simulation of soil. Details can be found in the Vortex Studio Theory Guide.

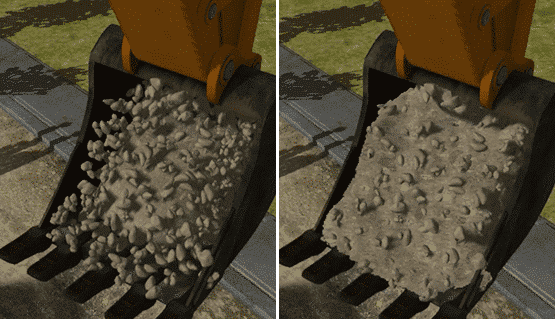

This post highlights some ways in which we have updated our soil rendering to allow for more accurate visuals, smaller particle sizes, and proper behavior.

Creating a Proper Soil Surface

In the past, we used effects such as particle meshes and dusty billboards to render soil volumes in Vortex Studio. This method didn’t feel entirely natural, because the number of particles needed for a truly realistic look required a lot of computing resources. Therefore, in our recent update (Vortex Studio 2018a), we switched to the Screen Space Mesh (SSM) technique, which was initially developed for fluid rendering, and adapted it for the rendering of opaque textured soil.

In brief, the SSM technique generates a mesh in view space using the depth data of a set of particles. In other words, the depth map of the particles is processed and blurred to recreate a big blob of particles. The normals, which are generated from the depth map, are used for lighting, giving the blob a nice, liquid look.
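To make the "normals from the depth map" step concrete, here is a minimal CPU-side sketch in Python. It is an illustration only, not shader code from Vortex Studio: the function name `normals_from_depth` is hypothetical, and it assumes a simplified orthographic view in which the surface is just z = depth(x, y), so the normal can be estimated from finite differences between neighboring pixels.

```python
import math

def normals_from_depth(depth):
    """Estimate one unit normal per pixel from a 2D depth grid.

    Assumption: orthographic view, so the surface is z = depth(x, y)
    and its (unnormalized) normal is (-dz/dx, -dz/dy, 1).
    """
    h, w = len(depth), len(depth[0])
    normals = [[(0.0, 0.0, 1.0)] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            # Clamp neighbor indices at the image border.
            xl, xr = max(x - 1, 0), min(x + 1, w - 1)
            yu, yd = max(y - 1, 0), min(y + 1, h - 1)
            dzdx = (depth[y][xr] - depth[y][xl]) / max(xr - xl, 1)
            dzdy = (depth[yd][x] - depth[yu][x]) / max(yd - yu, 1)
            n = (-dzdx, -dzdy, 1.0)
            length = math.sqrt(sum(c * c for c in n))
            normals[y][x] = tuple(c / length for c in n)
    return normals

# A flat depth map faces the camera everywhere; a ramp tilts the normal.
flat = normals_from_depth([[1.0] * 3 for _ in range(3)])
ramp = normals_from_depth([[float(x) for x in range(3)] for _ in range(3)])
```

Because the normals come from the blurred depth, any smoothing applied to the depth map shows up directly in the lighting, which is where the liquid look comes from.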

Implementing the SSM effect was not difficult overall, but the blurring step presented a significant challenge. It is also the step that makes the whole thing work, because it merges the edges of the particles. In the end, we used a two-pass blur, which suited our needs well, especially with regard to performance. Even with an adapted blurring algorithm, however, the SSM output still required work to make it look like real soil.
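For readers unfamiliar with the term, a two-pass blur usually means running a 1D kernel horizontally and then vertically instead of one full 2D kernel. The Python sketch below shows that general idea on a small depth grid. It assumes a separable kernel with fixed weights; the function names and the weights are illustrative, and the actual kernel used in Vortex Studio is not described in this post.

```python
def blur_1d_pass(grid, weights, horizontal):
    """Apply a 1D kernel along rows (horizontal) or columns (vertical)."""
    h, w = len(grid), len(grid[0])
    r = len(weights) // 2
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total = 0.0
            for k, wk in enumerate(weights):
                off = k - r
                # Clamp sample coordinates at the border.
                sx = min(max(x + off, 0), w - 1) if horizontal else x
                sy = y if horizontal else min(max(y + off, 0), h - 1)
                total += wk * grid[sy][sx]
            out[y][x] = total
    return out

def two_pass_blur(grid, weights):
    """Horizontal pass followed by a vertical pass."""
    return blur_1d_pass(blur_1d_pass(grid, weights, True), weights, False)

# A single spike spreads into a 3x3 footprint using 3 + 3 taps per pixel
# instead of the 9 taps a full 2D kernel would need.
spike = [[0.0] * 5 for _ in range(5)]
spike[2][2] = 16.0
blurred = two_pass_blur(spike, [0.25, 0.5, 0.25])
# blurred[2][2] == 4.0, blurred[2][1] == 2.0, blurred[1][1] == 1.0
```

The saving grows with the kernel size: a kernel of width k costs 2k fetches per pixel in two passes rather than k squared in one.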

Improving the SSM Technique

The most significant challenge we had to overcome was the SSM’s fluid-like appearance, which is fine for water but not so great for soil. There is a simple reason why SSM is never seen with anything other than a single color: there is no direct way to map textures onto it. The mesh is in screen space, and depth data alone is not enough to position the texture image on the mesh.

The naive approach to solving this was to generate UVs based on the gl_Position shader variable, which carries the projected, screen-relative position. However, since these positions are tied to the view rather than to the world, we saw an effect we call “texture swimming,” where the texture ended up following the camera.

To generate world space UV data (data independent from the rotation and position of the camera), we generated a second buffer, along with the depth buffer, to save the UVs of the particles. Since the physics engine does not modify rotations of the particles (for faster performance), we also randomly seeded the UVs associated with each particle to break visual patterns. To simplify and expedite UV generation, particles were converted to something similar to sphere-shaped billboards, so that computing depths, normals, and UVs came down to simple texture fetches.
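The difference between the two approaches can be sketched in a few lines of Python. This is a hypothetical illustration, not the Vortex Studio implementation: the function names, the `tile` parameter, and the use of the particle ID as a random seed are all assumptions made for the example. The point is that the per-particle UV depends only on the particle and the position on its billboard, while the naive UV depends on where the pixel lands on screen.

```python
import random

def seeded_uv_offset(particle_id):
    """Deterministic pseudo-random UV offset for one particle (assumed scheme)."""
    rng = random.Random(particle_id)
    return rng.random(), rng.random()

def particle_uv(particle_id, local_x, local_y, tile=0.25):
    """UV for a point on a particle's billboard.

    local_x and local_y are in [-1, 1] across the billboard; tile is the
    fraction of the texture that one particle covers (hypothetical parameter).
    """
    off_u, off_v = seeded_uv_offset(particle_id)
    u = (off_u + 0.5 * (local_x + 1.0) * tile) % 1.0
    v = (off_v + 0.5 * (local_y + 1.0) * tile) % 1.0
    return u, v

def screen_space_uv(pixel_x, pixel_y, width, height):
    """Naive UV taken from the pixel position; it changes when the camera moves."""
    return pixel_x / width, pixel_y / height

# The same particle keeps the same UV wherever it appears on screen,
# and two particles sample different parts of the texture.
uv_a = particle_uv(1, 0.0, 0.0)
uv_b = particle_uv(2, 0.0, 0.0)
```

Seeding from a stable particle identifier keeps the offset constant from frame to frame, so the texture does not flicker while still differing from one particle to the next.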

At this point, we had to choose whether to generate the normals using the depth buffer or use those of the particles (through yet another buffer). Generating the normals from the depth buffer led to some excellent results for fluidic materials, but that smooth, liquid look was exactly what we wanted to avoid for soil. We therefore decided to use the particles’ normals instead.

For seamless rendering of soil and rock, we combined mesh instancing and SSM. These instanced meshes were randomly selected original particles used to generate the SSM’s input depth buffer. This allowed us to apply one material per particle geometry instead of one global material for all geometry in the Soil Particles extension. This means that we can, for example, now render rocks into loamy soil.

Optimizing with a Distance-Dependent Blur

Another issue with using the SSM effect is its view dependence. Because of how blurring works, the camera’s distance from the mesh greatly affects the amount of blur that is applied to it. This artifact is not as obvious when using transparent materials (such as water) but, in our case, it became very obvious because we are dealing exclusively with opaque materials.

Our solution was to use a view-dependent kernel that blurs less as the camera moves away. The kernel size is computed using the view distance, screen resolution, and near and far clipping values. Since those values do not change much (in our case), we simply hardcoded values that made sense for us instead of making the blurring steps even more computationally expensive and complex.
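As a rough illustration of how such a kernel size could be derived rather than hardcoded, the Python sketch below converts a fixed world-space blur radius into a pixel radius. It is an assumption-laden example: the field of view `fov_y_deg`, the `world_radius`, and the clamp `max_radius` are hypothetical parameters that do not appear in this post, and the depth linearization assumes a standard OpenGL-style perspective depth buffer in [0, 1].

```python
import math

def linearize_depth(d, near, far):
    """Convert a [0, 1] perspective depth-buffer value to a view distance."""
    z_ndc = 2.0 * d - 1.0
    return 2.0 * near * far / (far + near - z_ndc * (far - near))

def blur_radius_pixels(view_distance, screen_height, fov_y_deg,
                       world_radius, max_radius=32):
    """Pixel radius that covers world_radius at the given view distance."""
    # Focal length in pixels for a pinhole camera (assumed camera model).
    focal_px = screen_height / (2.0 * math.tan(math.radians(fov_y_deg) / 2.0))
    radius = world_radius * focal_px / view_distance
    # Clamp so the kernel never vanishes or grows without bound.
    return max(1, min(max_radius, round(radius)))

# Farther away, the same patch of soil covers fewer pixels,
# so the kernel shrinks with distance.
near_radius = blur_radius_pixels(2.0, 1080, 90.0, 0.05)
far_radius = blur_radius_pixels(20.0, 1080, 90.0, 0.05)
```

When the camera stays within a narrow range of distances, as in an operator cab, a lookup of a few precomputed radii gives nearly the same result without the per-pixel arithmetic.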

SSM is a computationally expensive effect, even if it is more efficient than rendering the huge number of particles that would otherwise be needed. The blurring step is by far the most expensive part of the whole effect. To reduce this cost, we use an interleaved kernel, which extends the size of the kernel without adding more texture fetches. There is a side effect in doing so: it adds a few pixels here and there around the mesh. Since the soil being dug and the soil around it are of similar material, these “rogue” pixels are often not seen.
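One way to read "interleaved kernel" is a kernel whose taps are spaced more than one texel apart, so the footprint grows while the number of fetches stays the same. The Python sketch below shows that interpretation on a single row of depth values. The `stride` parameter and the function name are hypothetical, and the actual sampling pattern used in Vortex Studio may differ.

```python
def strided_blur_row(row, weights, stride=1):
    """1D blur whose taps are `stride` texels apart (same number of fetches)."""
    n = len(row)
    r = len(weights) // 2
    out = []
    for x in range(n):
        total = 0.0
        for k, wk in enumerate(weights):
            # Clamp sample positions at the ends of the row.
            sx = min(max(x + (k - r) * stride, 0), n - 1)
            total += wk * row[sx]
        out.append(total)
    return out

row = [0.0, 0.0, 0.0, 0.0, 8.0, 0.0, 0.0, 0.0, 0.0]
weights = [0.25, 0.5, 0.25]

dense = strided_blur_row(row, weights, stride=1)
# dense  == [0, 0, 0, 2, 4, 2, 0, 0, 0]: three taps, three-texel footprint
sparse = strided_blur_row(row, weights, stride=2)
# sparse == [0, 0, 2, 0, 4, 0, 2, 0, 0]: three taps, five-texel footprint
```

The gaps in the `sparse` result show where isolated pixels can appear: the spike is spread two texels away while the texels in between receive nothing, which matches the kind of stray pixels around the mesh described above.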

Conclusion

Working with the SSM was quite an interesting challenge, though good documentation and online examples made the task much simpler for us. As a team, we are quite happy with the overall results and believe we offer one of the most advanced and realistic soil renderings in the simulation industry.

Of course, there is more work to be done on our soil rendering technology. For instance, the transition between the displaced soil and the HeightField/terrain remains obvious, although good art can do a lot to make it less noticeable.

We’re working on it!